Hardware Acceleration of Explainable Machine Learning

- Jaden Pan

- May 24, 2022

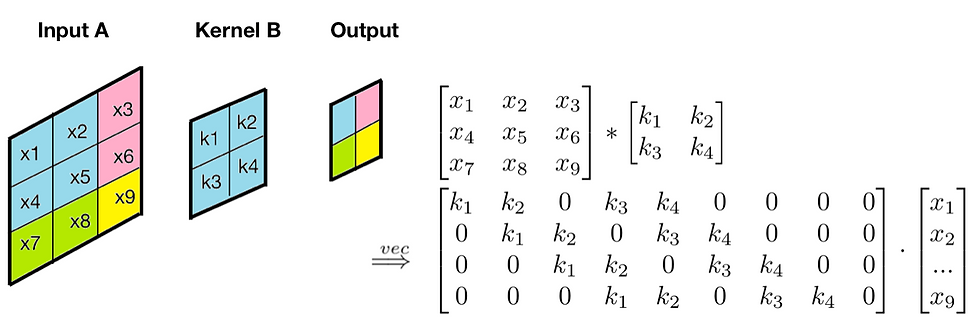

Machine learning (ML) has achieved human-level performance in a variety of fields. However, because of its black-box nature, it cannot explain its outcomes. While recent efforts on explainable ML have received significant attention, the existing solutions are not applicable to real-time systems: they formulate interpretability as an optimization problem, which requires numerous iterations of time-consuming, complex computations. To make matters worse, existing implementations are not amenable to hardware-based acceleration.

In this paper, we propose an efficient framework that enables acceleration of explainable ML procedures with hardware accelerators. We explore the effectiveness of both Tensor Processing Unit (TPU) and Graphics Processing Unit (GPU) based architectures in accelerating explainable ML. Specifically, this paper makes three important contributions:

1. To the best of our knowledge, our proposed work is the first attempt to enable hardware acceleration of explainable ML.
2. Our proposed solution exploits the synergy between matrix convolution and the Fourier transform, and therefore takes full advantage of the TPU's inherent ability to accelerate matrix computations.
3. Our proposed approach can enable real-time outcome interpretation.

Extensive experimental evaluation demonstrates that the proposed approach deployed on a TPU can provide drastic improvements in interpretation time (39x on average) and energy efficiency (69x on average) compared to existing acceleration techniques.
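To make the "synergy between matrix convolution and Fourier transform" more concrete, here is a minimal sketch of the two textbook facts it rests on. This does not reproduce the paper's explainability formulation. First, a 2-D discrete Fourier transform (DFT) can be written as two ordinary matrix products, `F_m @ x @ F_n`, which is exactly the kind of dense matrix work a TPU is built to accelerate. Second, the convolution theorem says a 2-D circular convolution equals the inverse DFT of the elementwise product of the two DFTs. All function names below (`dft2`, `conv2d_circular_fourier`, and so on) are hypothetical and chosen for illustration. Plain Python is used so the example has no dependencies; on real hardware one would use an accelerated FFT or matmul library instead.

```python
import cmath


def dft_matrix(n):
    """n x n DFT matrix: F[j][k] = exp(-2*pi*i*j*k / n)."""
    return [[cmath.exp(-2j * cmath.pi * j * k / n) for k in range(n)] for j in range(n)]


def matmul(a, b):
    """Plain matrix product a @ b for lists of lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def conj(m):
    """Elementwise complex conjugate of a matrix."""
    return [[v.conjugate() for v in row] for row in m]


def dft2(x):
    """2-D DFT expressed purely as two matrix products: F_m @ x @ F_n."""
    m, n = len(x), len(x[0])
    return matmul(matmul(dft_matrix(m), x), dft_matrix(n))


def idft2(X):
    """Inverse 2-D DFT: conj(F_m) @ X @ conj(F_n) / (m * n)."""
    m, n = len(X), len(X[0])
    y = matmul(matmul(conj(dft_matrix(m)), X), conj(dft_matrix(n)))
    return [[v / (m * n) for v in row] for row in y]


def conv2d_circular_direct(x, k):
    """Reference: direct 2-D circular convolution of x with a same-shape kernel k."""
    m, n = len(x), len(x[0])
    return [[sum(x[a][b] * k[(i - a) % m][(j - b) % n]
                 for a in range(m) for b in range(n))
             for j in range(n)] for i in range(m)]


def conv2d_circular_fourier(x, k):
    """Convolution theorem: conv(x, k) = IDFT2(DFT2(x) * DFT2(k)), elementwise product."""
    X, K = dft2(x), dft2(k)
    prod = [[a * b for a, b in zip(rx, rk)] for rx, rk in zip(X, K)]
    # Inputs are real, so the imaginary parts are only round-off noise.
    return [[v.real for v in row] for row in idft2(prod)]


if __name__ == "__main__":
    x = [[1, 2, 3], [4, 5, 6], [7, 8, 9]]
    k = [[0, 0, 0], [0, 1, 0], [0, 0, 0]]  # kernel that shifts x by one row and one column
    print(conv2d_circular_direct(x, k))
    print([[round(v, 6) for v in row] for row in conv2d_circular_fourier(x, k)])
```

Both routes produce the same result, but the Fourier route replaces the nested convolution sums with matrix products and an elementwise multiply. Under these assumptions, that restructuring is what lets matrix-oriented hardware do the heavy lifting.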

https://ui.adsabs.harvard.edu/abs/2021arXiv210311927P/abstract